The Convergence of Artificial Intelligence and Simulation


In today’s rapidly evolving technological landscape, AI continues to advance, presenting engineers with fresh obstacles as they strive to integrate AI into various systems. One of the main complexities lies in recognising that the effectiveness of AI models relies heavily on the quality and sufficiency of the training data. Insufficient, inaccurate, or biased data can severely degrade a model’s predictions.

This article examines three primary ways in which AI and simulation intersect. First, simulation models address the challenge of inadequate data by synthesizing data that is hard to obtain. Second, AI models serve as approximations of computationally intensive, high-fidelity simulations, an approach often referred to as reduced-order modeling. Lastly, AI models are deployed in embedded systems for applications such as controls, signal processing and embedded vision, where simulation plays a crucial role in the development phase.

Engineers are finding new ways to make AI models more effective. The sections below show how simulation and AI complement each other in addressing challenges related to time limitations, model dependability, and data quality.

Overcoming Data Challenges Encountered in the Training and Validation of AI Models:

The process of collecting real-world data and creating good, clean, and catalogued data is difficult and time consuming. Engineers also must be mindful of the fact that while most AI models are static (they run using fixed parameter values), they are constantly exposed to new data and that data might not necessarily be captured in the training set.

Projects are more likely to fail without robust data to help train a model, making data preparation a crucial step in the AI workflow. ‘Bad’ data can leave an engineer spending hours trying to determine why the model is not working, without the promise of insightful results.

Simulation can help engineers overcome these challenges. In recent years, data-centric AI has brought the AI community’s focus to the importance of training data. Rather than spending all of a project’s time tweaking the AI model’s architecture and parameters, engineers often find that time spent improving the training data yields larger improvements in accuracy. Using simulation to augment existing training data has multiple benefits:

- Computational simulation is in general much less costly than physical experiments

- The engineer has full control over the environment and can simulate scenarios that are difficult or too dangerous to create in the real world

- The simulation gives access to internal states that might not be measured in an experimental setup, which can be very useful when debugging why an AI model doesn’t perform well in certain situations

With a model’s performance so dependent on the quality of the data it is trained with, engineers can improve outcomes with an iterative process: simulate data, update the AI model, observe which conditions it cannot predict well, and collect more simulated data for those conditions.
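The iterative loop described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a Simulink workflow: `simulate` is a hypothetical stand-in for a simulation run at one operating condition, and the "AI model" is plain linear interpolation, chosen only to keep the example self-contained.

```python
import math

def simulate(x):
    """Hypothetical stand-in for one simulation run at operating condition x."""
    return math.sin(3.0 * x) + 0.5 * x

def predict(train, x):
    """Toy model: linear interpolation between neighbouring training samples."""
    pts = sorted(train)
    if x <= pts[0][0]:
        return pts[0][1]
    if x >= pts[-1][0]:
        return pts[-1][1]
    for (x0, y0), (x1, y1) in zip(pts, pts[1:]):
        if x0 <= x <= x1:
            t = (x - x0) / (x1 - x0)
            return y0 + t * (y1 - y0)

def worst_condition(train, conditions):
    """Return (largest absolute error, condition where it occurs)."""
    return max((abs(predict(train, c) - simulate(c)), c) for c in conditions)

# Operating conditions to check the model against (assumed range 0..2).
conditions = [i / 50 for i in range(101)]

# Start from a sparse training set of three simulated samples.
train = [(x, simulate(x)) for x in (0.0, 1.0, 2.0)]

history = []
for _ in range(8):
    err, cond = worst_condition(train, conditions)  # observe the weakest spot
    history.append(err)
    train.append((cond, simulate(cond)))            # simulate more data there

final_error, _ = worst_condition(train, conditions)
print(f"worst-case error: {history[0]:.3f} -> {final_error:.3f}")
```

Each pass adds one simulated sample where the model is currently weakest, so the data budget goes to the conditions that matter most. In practice the model, the error metric, and the sampling rule would all be more elaborate.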

Using industry tools such as Simulink and Simscape, engineers can generate simulated data that mirrors real-world scenarios. The combination of Simulink and MATLAB enables engineers to simulate their data in the same environment in which they build their AI models, so they can automate more of the process without switching toolchains.

Overcoming Challenges Encountered in the Approximation of Sophisticated Systems Using AI:

When designing algorithms that interact with physical systems, such as an algorithm to control a hydraulic valve, a simulation-based model of the system is key to enabling rapid design iteration. In the controls field, this is called the “plant model”; in the wireless field, the “channel model”; and in the reinforcement learning field, the “environment model”. Whatever you call it, the idea is the same: create a simulation-based model that gives you the necessary accuracy to recreate the physical system your algorithms interact with.

The problem with this approach is that to achieve the “necessary accuracy” engineers have historically built high-fidelity models from first principles, which for complex systems can take a long time to both build and simulate. Long-running simulations mean that less design iteration will be possible, meaning there may not be enough time to evaluate potentially better design alternatives.

AI comes into the picture here in that engineers can take the high-fidelity model of the physical system that they’ve built and approximate it with an AI model (a reduced-order model). In other situations, they might train the AI model directly from experimental data, bypassing the creation of a physics-based model altogether. The benefit is that the reduced-order model is much less computationally expensive than the first-principles model, so the engineer can explore more of the design space. And, if a physics-based model of the system does exist, the engineer can always use that model later in the process to validate the design determined using the AI model.
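As a rough illustration of this idea, the sketch below replaces a slow, time-stepped simulation with a cheap fitted approximation and then checks the approximation against the original model. Everything here is an assumption made for the example: the "high-fidelity" model is a simple mass-spring-damper step response, the parameter range is arbitrary, and a cubic polynomial stands in for the AI model.

```python
def high_fidelity_overshoot(zeta, dt=1e-3, t_end=10.0):
    """Stand-in for an expensive first-principles simulation.

    Integrates x'' + 2*zeta*x' + x = 1 with semi-implicit Euler and
    returns the peak of the step response.
    """
    x, v, peak = 0.0, 0.0, 0.0
    for _ in range(int(t_end / dt)):
        a = 1.0 - 2.0 * zeta * v - x
        v += a * dt
        x += v * dt
        peak = max(peak, x)
    return peak

def fit_polynomial(xs, ys, degree):
    """Least-squares polynomial fit via normal equations and Gauss-Jordan."""
    n = degree + 1
    # Augmented matrix [A^T A | A^T y] for the monomial basis 1, x, x^2, ...
    m = [[sum(x ** (i + j) for x in xs) for j in range(n)]
         + [sum(y * x ** i for x, y in zip(xs, ys))]
         for i in range(n)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                f = m[r][col] / m[col][col]
                m[r] = [a - f * b for a, b in zip(m[r], m[col])]
    return [m[i][n] / m[i][i] for i in range(n)]

def surrogate(coeffs, x):
    """Cheap approximation: evaluate the fitted polynomial."""
    return sum(c * x ** i for i, c in enumerate(coeffs))

# Run the expensive model at a handful of damping ratios to get training data.
train_zeta = [0.2 + 0.1 * i for i in range(7)]
train_peak = [high_fidelity_overshoot(z) for z in train_zeta]
coeffs = fit_polynomial(train_zeta, train_peak, degree=3)

# Validate the surrogate against the full model at unseen operating points.
check_zeta = [0.25, 0.45, 0.65]
max_err = max(abs(surrogate(coeffs, z) - high_fidelity_overshoot(z))
              for z in check_zeta)
print(f"max validation error: {max_err:.4f}")
```

Once fitted, each surrogate evaluation costs a few multiplications instead of thousands of integration steps, which is what makes broader design-space exploration affordable. The final validation step mirrors the practice of returning to the physics-based model to confirm a design.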

Recent advances in the AI space, such as neural ODEs (Ordinary Differential Equations), combine AI training techniques with models that have physics-based principles embedded within them. Such models can be useful when there are certain aspects of the physical system that the engineer wishes to retain, while approximating the rest of the system with a more data-centric approach.

Overcoming Challenges Encountered in Algorithmic Design Using AI:

Engineers in applications like control systems have come to rely more and more on simulations when designing their algorithms. In many cases, these engineers are developing virtual sensors: observers that attempt to calculate a value that isn’t directly measured by the available sensors. A variety of approaches are used, including linear models and Kalman filters.

But the ability of these methods to capture the nonlinear behavior present in many real-world systems is limited, so engineers are turning to AI-based approaches which have the flexibility to model the complexities. They use data (either measured or simulated) to train an AI model that can predict the unobserved state from the observed states, and then integrate that AI model with the system.
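A minimal sketch of such a virtual sensor follows. The signals and the relationship between them are invented for illustration: `simulate_sample` is a hypothetical simulation in which a hidden quantity depends nonlinearly on two measured ones, and k-nearest-neighbours regression stands in for whatever AI model an engineer would actually choose.

```python
import math
import random

def simulate_sample(rng):
    """Hypothetical simulation run: returns (observed states, unobserved state)."""
    speed = rng.uniform(0.0, 1.0)      # measured signal (assumed, normalised)
    current = rng.uniform(0.0, 1.0)    # measured signal (assumed, normalised)
    hidden = current ** 2 * math.exp(-speed)  # not measured, nonlinear
    return (speed, current), hidden

def knn_predict(train, observed, k=5):
    """Estimate the hidden state as the mean over the k closest training samples."""
    nearest = sorted(train, key=lambda s: math.dist(s[0], observed))[:k]
    return sum(hidden for _, hidden in nearest) / k

rng = random.Random(0)  # fixed seed so the run is repeatable
train = [simulate_sample(rng) for _ in range(500)]    # simulated training data
holdout = [simulate_sample(rng) for _ in range(50)]   # unseen operating points

# Mean absolute error of the virtual sensor on the held-out samples.
mae = sum(abs(knn_predict(train, obs) - hidden)
          for obs, hidden in holdout) / len(holdout)
print(f"virtual sensor mean absolute error: {mae:.4f}")
```

The estimator is trained only on simulated data and evaluated on operating points it has not seen. Note that a nearest-neighbour model keeps the whole training set in memory, so it would likely be a poor fit for constrained hardware; it is used here only because it is short and needs no training loop.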

In this case, the AI model is included as part of the controls algorithm that ends up on the physical hardware, which has performance/memory limitations, and typically needs to be programmed in a lower-level language like C/C++. These requirements can impose restrictions on the types of machine learning models that are appropriate for such applications, so the engineers may need to try multiple models and compare trade-offs in accuracy and on-device performance.

At the forefront of research in this area, reinforcement learning takes this approach a step further. Rather than learning just the estimator, reinforcement learning learns the entire control strategy. This has been shown to be a powerful technique in some challenging applications such as robotics and autonomous systems, but building such a model requires an accurate model of the environment, which may not be readily available, as well as the computational power to run a large number of simulations.

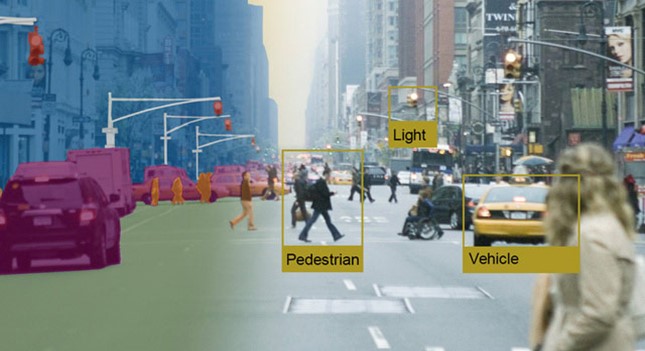

In addition to virtual sensors and reinforcement learning, AI algorithms are increasingly used in embedded vision, audio and signal processing, and wireless applications. For example, in a car with automated driving capabilities, an AI algorithm can detect lane markings on the road to help keep the car centered in the lane. In a hearing aid device, AI algorithms can help enhance speech and suppress noise. In a wireless application, AI algorithms can apply digital predistortion to offset the effects of nonlinearities in a power amplifier. In all these applications, AI algorithms are part of the larger system. Simulation is used for integration testing to ensure the overall design meets requirements.

Prospects of AI modeling

In general, as models become larger and more complex to handle increasingly demanding tasks, AI and simulation will become even more vital resources for engineers. Popular industry software such as Simulink and MATLAB enables engineers to improve their workflows and save time by generating synthetic data, employing reduced-order models, and incorporating embedded AI algorithms for tasks like controls, signal processing, embedded vision, and wireless applications.

